What Is an SEO Log File? Guide for AI Bots & GEO (2026)

If you manage a website, your server is quietly recording every single visit from real users and from bots. Those records are stored in your SEO log file. In the age of Generative Engine Optimization (GEO), reading your log file is no longer optional. It tells you exactly which AI bots are crawling your site, what they read, and how often they come back.

This guide explains what an SEO log file is, how to read it, and what the data means for your visibility in AI-powered search engines like ChatGPT, Claude, Perplexity, and Apple Intelligence.

What Is an SEO Log File?

An SEO log file (also called a server log or access log) is a plain text file that your web server creates automatically. Every time someone or something visits your website, the server writes one line to the log. That line records:

- The IP address of the visitor

- The date and time of the request

- The URL that was requested

- The HTTP status code returned (200, 301, 404, etc.)

- The size of the response in bytes

- The referrer URL (where the visitor came from)

- The user agent string (what browser or bot made the request)

Here is an example of a single line from a real log file:

66.249.75.69 - - [28/Feb/2026:19:37:06 +0700] "GET /robots.txt HTTP/1.1" 200 125 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"

That one line tells you that Googlebot visited your robots.txt file on February 28, 2026, and received a successful 200 response. Multiply that by thousands of lines, and you have a complete picture of how every bot and user interacts with your site.
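To turn lines like this into fields you can count and filter, a short parser is enough. The sketch below assumes your server writes the common "combined" log format that the example line follows; `COMBINED_LOG` and `parse_line` are hypothetical names, and a custom log format would need a different pattern.

```python
import re
from typing import Optional

# Pattern for the "combined" log format shown above:
# IP, identity, user, [timestamp], "request", status, bytes, "referrer", "user agent"
COMBINED_LOG = re.compile(
    r'(?P<ip>\S+) (?P<ident>\S+) (?P<user>\S+) '
    r'\[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) (?P<protocol>[^"]+)" '
    r'(?P<status>\d{3}) (?P<size>\d+|-) '
    r'"(?P<referrer>[^"]*)" "(?P<user_agent>[^"]*)"'
)


def parse_line(line: str) -> Optional[dict]:
    """Return the fields of one log line as a dict, or None if the line does not match."""
    match = COMBINED_LOG.match(line)
    if match is None:
        return None
    fields = match.groupdict()
    fields["status"] = int(fields["status"])
    # A "-" size means no response body was sent; treat it as 0 bytes.
    fields["size"] = 0 if fields["size"] == "-" else int(fields["size"])
    return fields


sample = ('66.249.75.69 - - [28/Feb/2026:19:37:06 +0700] "GET /robots.txt HTTP/1.1" '
          '200 125 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; '
          '+http://www.google.com/bot.html)"')
print(parse_line(sample))
```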

Why SEO Log File Analysis Matters in 2026

Log file analysis has always been a powerful technical SEO tool. But in 2026, it has become critical for a new reason: AI bots are now some of the most active crawlers on the web, and they determine whether your content appears in AI-generated answers.

Traditional SEO tools show you Google rankings. Log file analysis shows you something different: what is actually happening on your server, from every bot that visits it. You can see if GPTBot is ignoring your most important pages, if ClaudeBot is reading your content regularly, or if PerplexityBot is crawling pages you did not even know were indexed.

Without log file analysis, you are flying blind in the GEO era.

What Is GEO and Why Does It Connect to Log Files?

Generative Engine Optimization (GEO) is the practice of optimizing your website so that AI-powered engines like ChatGPT, Claude, Perplexity, and Apple Intelligence include your content in their generated answers. These AI systems use web crawlers, just like Google does, to discover and index content.

Your log file is the only direct evidence that an AI bot has visited your site. If you want to understand your GEO performance, you need to monitor which AI bots are crawling you, how often, and which pages they are reading. Log files give you that data in real time.

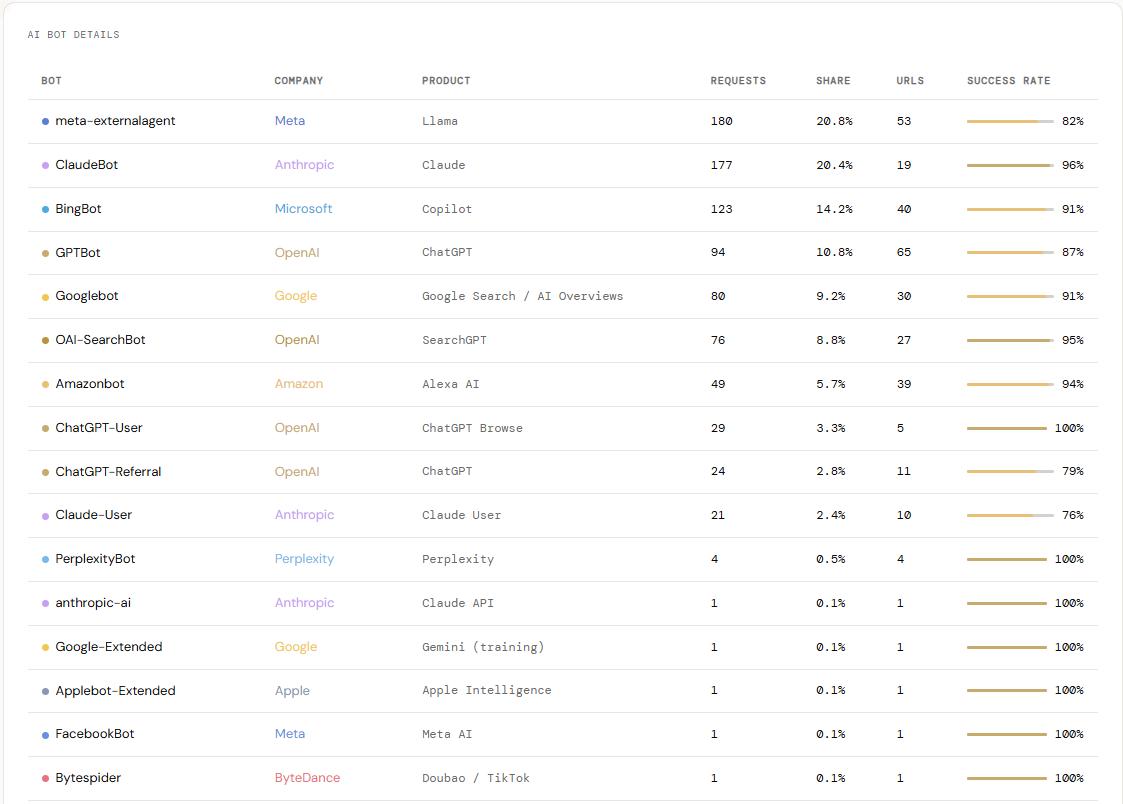

The Most Important AI Bots in Your SEO Log File (2026)

Here are the key AI and search bots you should know how to identify in your server log file.

| Bot | Company | Product |
|---|---|---|
| GPTBot | OpenAI | ChatGPT |
| OAI-SearchBot | OpenAI | SearchGPT |
| ChatGPT-User | OpenAI | ChatGPT Browse |
| ChatGPT-Referral | OpenAI | ChatGPT |
| ClaudeBot | Anthropic | Claude |
| Claude-User | Anthropic | Claude User |
| anthropic-ai | Anthropic | Claude API |
| meta-externalagent | Meta | Llama |
| FacebookBot | Meta | Meta AI |
| cohere-ai | Cohere | Cohere |
| PerplexityBot | Perplexity | Perplexity |
| Amazonbot | Amazon | Alexa AI |
| Applebot-Extended | Apple | Apple Intelligence |
| Bytespider | ByteDance | Doubao / TikTok |
| BingBot | Microsoft | Copilot |
| Googlebot | Google | Google Search / AI Overviews |
| Google-Extended | Google | Gemini (training) |
| Gemini-Deep-Research | Google | Gemini Deep Research |
| Google-Agent ⭐ New | Google | AI Agents |
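The table above can double as a lookup list. This minimal sketch, assuming that a case-insensitive substring match on the user agent is good enough for a first pass, maps a user agent string to a bot token and company; `AI_BOT_TOKENS` and `identify_bot` are hypothetical names, and the list is illustrative rather than complete. User agent strings can be spoofed, so treat a match as a label, not as proof of identity.

```python
from typing import Optional, Tuple

# (user agent token, company) pairs based on the table above, checked in order.
# Illustrative, not exhaustive. Google-Extended and Applebot-Extended are left out
# because they are robots.txt control tokens rather than separate crawlers, so they
# are not expected in a user agent string. The newer Google entries are left out
# because their user agent strings are not given above. ChatGPT-Referral is not a bot.
AI_BOT_TOKENS = [
    ("GPTBot", "OpenAI"),
    ("OAI-SearchBot", "OpenAI"),
    ("ChatGPT-User", "OpenAI"),
    ("ClaudeBot", "Anthropic"),
    ("Claude-User", "Anthropic"),
    ("anthropic-ai", "Anthropic"),
    ("meta-externalagent", "Meta"),
    ("FacebookBot", "Meta"),
    ("cohere-ai", "Cohere"),
    ("PerplexityBot", "Perplexity"),
    ("Amazonbot", "Amazon"),
    ("Applebot", "Apple"),
    ("Bytespider", "ByteDance"),
    ("bingbot", "Microsoft"),
    ("Googlebot", "Google"),
]


def identify_bot(user_agent: str) -> Optional[Tuple[str, str]]:
    """Return (token, company) for the first known token found in user_agent, else None."""
    ua = user_agent.lower()
    for token, company in AI_BOT_TOKENS:
        if token.lower() in ua:
            return token, company
    return None


print(identify_bot("Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; "
                   "ClaudeBot/1.0; +claudebot@anthropic.com)"))
# prints ('ClaudeBot', 'Anthropic')
```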

1. GPTBot (OpenAI)

User agent string: Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; GPTBot/1.3; +https://openai.com/gptbot)

GPTBot crawls content to train ChatGPT’s language models. It focuses on high-quality, topically relevant pages and uses your sitemap to discover content. It does not execute JavaScript, so your most important content should be available in static HTML to ensure GPTBot can read it.

2. OAI-SearchBot (OpenAI)

User agent string: Mozilla/5.0 ... compatible; OAI-SearchBot/1.3; +https://openai.com/searchbot

OAI-SearchBot powers ChatGPT’s real-time search feature. Unlike GPTBot, this bot is looking for current, up-to-date content to include in live ChatGPT answers. It checks robots.txt frequently before crawling and focuses on expert content it can surface directly in search responses.

3. ChatGPT-User (OpenAI)

User agent string: Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko); compatible; ChatGPT-User/1.0; +https://openai.com/bot

ChatGPT-User is triggered when a real ChatGPT user shares a link inside the app and asks ChatGPT to read it. Each visit from this bot represents a real human interaction, not an automated crawl. Seeing it in your log file is a strong signal that your content is already being used inside active ChatGPT conversations.

4. ChatGPT-Referral (OpenAI)

User agent string: Not a bot. ChatGPT-Referral appears as a referral traffic source in your analytics platform, not in your server log file.

ChatGPT-Referral is recorded in your Google Analytics when ChatGPT includes a link to your page in a generated answer and a real user clicks that link. It shows up as traffic from chatgpt.com or with a utm_source=chatgpt.com parameter. Every ChatGPT-Referral visit means ChatGPT cited your page, a user read that answer, and then clicked through to your site. It is the clearest business signal that your GEO strategy is working.
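Although ChatGPT-Referral is an analytics label rather than a bot, the same click also reaches your server as an ordinary browser request. This sketch assumes the two signals described above, a `utm_source=chatgpt.com` parameter on the requested URL or a referrer on chatgpt.com, and checks a log entry for either; `is_chatgpt_referral` is a hypothetical helper, and browsers do not always send a referrer.

```python
from urllib.parse import parse_qs, urlsplit


def is_chatgpt_referral(request_path: str, referrer: str) -> bool:
    """Heuristic check for a click-through from ChatGPT in one access log entry."""
    # Signal 1: utm_source=chatgpt.com on the requested URL.
    query = parse_qs(urlsplit(request_path).query)
    if "chatgpt.com" in query.get("utm_source", []):
        return True
    # Signal 2: the referrer field points at chatgpt.com or one of its subdomains.
    # A "-" referrer (none sent) has no hostname and falls through to False.
    host = (urlsplit(referrer).hostname or "").lower()
    return host == "chatgpt.com" or host.endswith(".chatgpt.com")


print(is_chatgpt_referral("/geo/what-is-generative-engine-optimization/?utm_source=chatgpt.com", "-"))
print(is_chatgpt_referral("/geo/", "https://chatgpt.com/"))
```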

5. ClaudeBot (Anthropic)

User agent string: Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; ClaudeBot/1.0; +claudebot@anthropic.com)

ClaudeBot is Anthropic’s primary crawler, used to train Claude and power Claude’s web-connected features. It typically starts by reading your robots.txt and sitemap before moving to individual content pages, which suggests it uses your site structure to prioritize what to read. There is also a separate bot called Claude-User, which is triggered when a real Claude user shares a link for real-time reading.

6. PerplexityBot

User agent string: Mozilla/5.0 ... compatible; PerplexityBot/1.0; +https://perplexity.ai/perplexitybot

PerplexityBot crawls for Perplexity AI, one of the fastest-growing AI search engines. Even a small number of visits matter because Perplexity’s citation model means being crawled can lead directly to being cited in AI-generated answers.

7. Meta-ExternalAgent (Facebook / Meta)

User agent string: meta-externalagent/1.1 (+https://developers.facebook.com/docs/sharing/webmasters/crawler)

Meta’s crawler powers Meta AI across Facebook, Instagram, and WhatsApp. It is often one of the most active bots on a site and pays particular attention to XML sitemaps to discover new content quickly.

8. Bingbot (Microsoft)

User agent string: Mozilla/5.0 ... compatible; bingbot/2.0; +http://www.bing.com/bingbot.htm

Bingbot powers both Bing search and Microsoft Copilot. Because Copilot uses Bing’s index for its AI answers, strong Bingbot crawl coverage is a direct factor in your Copilot visibility.

How to Read an SEO Log File: A Step-by-Step Example

Let’s break down a real log line from the sample file:

52.204.89.12 - - [28/Feb/2026:19:47:25 +0700] "GET /wp-json/oembed/1.0/embed?format=xml&url=https://justinha.info.vn/geo/what-is-generative-engine-optimization/ HTTP/1.1" 200 1392 "-" "Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; Amazonbot/0.1; ...)"

- 52.204.89.12 is the IP address. It sits in an Amazon AWS range, which is consistent with Amazonbot (a user agent string alone can be spoofed).

- 28/Feb/2026:19:47:25 +0700 is the date and time in the UTC+7 (Vietnam) time zone.

- GET /wp-json/oembed/1.0/embed?… is the request, which asked for oEmbed data about a GEO article.

- 200 means the server responded successfully.

- 1392 means the response was 1,392 bytes.

- Amazonbot/0.1 shows that Amazon’s crawler made this request.

This single line tells you that Amazonbot found your GEO article and pulled its structured metadata. That is a positive signal for AI visibility on Amazon and Alexa.
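Because the offset (+0700 here) is part of every timestamp, logs from servers in different time zones are easier to compare once converted to UTC. A minimal sketch with Python's standard `datetime` module; `to_utc` is a hypothetical helper, and `%b` assumes English month abbreviations (the default C locale).

```python
from datetime import datetime, timezone

# Log timestamps look like "28/Feb/2026:19:47:25 +0700":
# day/month-abbreviation/year:hour:minute:second, then the UTC offset.
LOG_TIME_FORMAT = "%d/%b/%Y:%H:%M:%S %z"


def to_utc(log_time: str) -> datetime:
    """Parse a log timestamp (with its offset) and convert it to UTC."""
    return datetime.strptime(log_time, LOG_TIME_FORMAT).astimezone(timezone.utc)


print(to_utc("28/Feb/2026:19:47:25 +0700").isoformat())
# prints 2026-02-28T12:47:25+00:00
```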

HTTP Status Codes: What They Mean for SEO

When you analyze your log file, pay close attention to the status codes bots receive. Here is what each one means for your SEO health:

- 200 OK: The page loaded successfully. This is what you want for all important pages.

- 301 Moved Permanently: The bot is following a redirect. Too many redirects waste crawl budget.

- 404 Not Found: The bot tried to access a page that does not exist. Fix or redirect these.

- 403 Forbidden: The bot was blocked. Check whether you are accidentally blocking good bots.

- 410 Gone: The page was intentionally removed. For deleted content, this is a clearer signal than a 404.

- 500 Internal Server Error: Your server failed to fulfill the request. Repeated server errors are a critical issue for crawl budget.

In the sample log, bots received 971 successful 200 responses, 46 404 (Not Found) errors, and 43 redirects. That is a healthy distribution overall, but the 404s are worth investigating.
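Counts like these come from tallying the status field of every line. The sketch below assumes the combined log format and hypothetical helper names (`count_statuses`, `paths_with_status`); the second helper lists which URLs returned a given code, which is the natural next step for investigating 404s. The four log lines are synthetic examples, not taken from the sample log.

```python
import re
from collections import Counter
from typing import Iterable

# Captures the requested path and the 3-digit status right after the quoted request,
# e.g. '"GET /old-page/ HTTP/1.1" 404 ' -> ("/old-page/", "404").
REQUEST_STATUS = re.compile(r'"\S+ (\S+) [^"]*" (\d{3}) ')


def count_statuses(lines: Iterable[str]) -> Counter:
    """Tally how many responses of each status code appear in the log."""
    counts: Counter = Counter()
    for line in lines:
        match = REQUEST_STATUS.search(line)
        if match:
            counts[int(match.group(2))] += 1
    return counts


def paths_with_status(lines: Iterable[str], status: int) -> Counter:
    """Tally the paths that returned `status`; use .most_common() to rank them."""
    paths: Counter = Counter()
    for line in lines:
        match = REQUEST_STATUS.search(line)
        if match and int(match.group(2)) == status:
            paths[match.group(1)] += 1
    return paths


# Synthetic lines for illustration only.
sample_log = [
    '203.0.113.5 - - [28/Feb/2026:19:40:00 +0700] "GET / HTTP/1.1" 200 512 "-" "GPTBot/1.3"',
    '203.0.113.5 - - [28/Feb/2026:19:41:00 +0700] "GET /old-page/ HTTP/1.1" 404 0 "-" "GPTBot/1.3"',
    '203.0.113.9 - - [28/Feb/2026:19:42:00 +0700] "GET /old-page/ HTTP/1.1" 404 0 "-" "ClaudeBot/1.0"',
    '203.0.113.9 - - [28/Feb/2026:19:43:00 +0700] "GET /blog HTTP/1.1" 301 0 "-" "ClaudeBot/1.0"',
]
print(count_statuses(sample_log))
print(paths_with_status(sample_log, 404).most_common(10))
```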

What to Look for in Your SEO Log File

When you open your log file, focus on these four key questions:

- Which AI bots are crawling your site? If GPTBot or ClaudeBot never appears in your logs, your content may be blocked in robots.txt or simply not discovered yet.

- What pages are bots crawling? Are they finding your most important content? Or are they wasting time on low-value URLs?

- What status codes are bots receiving? Every 404 or 500 a bot hits is wasted crawl budget.

- How often are bots returning? Frequent return visits usually suggest that a bot finds your content fresh and valuable.
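For the last question, a per-bot summary of hits, active days, and first and last visit is a simple starting point. This sketch assumes you have already reduced each log line to a `(bot_name, timestamp)` pair, for example with a user agent lookup based on the bot table earlier in this guide; `crawl_frequency` is a hypothetical helper.

```python
from collections import defaultdict
from datetime import datetime
from typing import Dict, Iterable, Tuple


def crawl_frequency(hits: Iterable[Tuple[str, datetime]]) -> Dict[str, dict]:
    """Summarize how often each bot visits: total hits, distinct days, first and last visit."""
    summary: Dict[str, dict] = {}
    days = defaultdict(set)  # bot name -> calendar dates with at least one hit
    for bot, when in hits:
        stats = summary.setdefault(
            bot, {"hits": 0, "active_days": 0, "first_seen": when, "last_seen": when}
        )
        stats["hits"] += 1
        stats["first_seen"] = min(stats["first_seen"], when)
        stats["last_seen"] = max(stats["last_seen"], when)
        days[bot].add(when.date())
        stats["active_days"] = len(days[bot])
    return summary


# Synthetic hits for illustration only.
sample_hits = [
    ("GPTBot", datetime(2026, 2, 27, 10, 0)),
    ("GPTBot", datetime(2026, 2, 28, 9, 30)),
    ("GPTBot", datetime(2026, 2, 28, 15, 5)),
    ("ClaudeBot", datetime(2026, 2, 28, 12, 0)),
]
for bot, stats in crawl_frequency(sample_hits).items():
    print(bot, stats["hits"], "hits on", stats["active_days"], "days, last seen", stats["last_seen"])
```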

Common Problems Found in SEO Log Files

Log file analysis reveals issues that traditional SEO tools cannot find. Here are the most common problems:

- Important pages not crawled by AI bots: Your best content may be invisible to GPTBot or ClaudeBot because of robots.txt rules, noindex tags, or simply because bots have not discovered it yet.

- Crawl budget wasted on parameter URLs: URLs like /?s={search_term_string} or pagination pages can consume crawl budget without adding SEO value.

- Redirect chains sending bots through extra hops: Each 301 redirect costs crawl budget. Chains of two or more redirects are especially wasteful.

- Bot traffic confused with human traffic: Without log file analysis, your analytics may be inflated by bot visits, giving you a false picture of real user engagement.

- Brute-force bots hitting your login page: The sample log showed multiple failed login attempts on wp-login.php. These waste server resources and are a potential security threat.
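Some of these problems can be flagged with simple URL rules. This is a rough heuristic, assuming WordPress-style paths (`/wp-login.php`, `/page/2/`); `waste_category` is a hypothetical helper, and any query string counts as a parameter URL, so legitimate endpoints such as the oEmbed request discussed earlier will be flagged too and need a manual look.

```python
import re
from collections import Counter
from typing import Optional
from urllib.parse import urlsplit

# Heuristic: WordPress-style pagination such as /blog/page/3/ (assumption about URL structure).
PAGINATION = re.compile(r"/page/\d+/?$")


def waste_category(path: str) -> Optional[str]:
    """Return a rough label for a low-value URL, or None if it looks like normal content."""
    parts = urlsplit(path)
    if parts.path.endswith("/wp-login.php"):
        return "login attempt"
    if parts.query:
        return "parameter URL"
    if PAGINATION.search(parts.path):
        return "pagination"
    return None


# Synthetic paths for illustration only.
paths = [
    "/?s={search_term_string}",
    "/wp-login.php",
    "/blog/page/3/",
    "/geo/what-is-generative-engine-optimization/",
]
print(Counter(waste_category(p) for p in paths))
```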

How to Get Your SEO Log File

The method depends on your hosting setup:

- Apache servers: Log files are usually at /var/log/apache2/access.log (Debian/Ubuntu) or /var/log/httpd/access_log (RHEL/CentOS), or in your cPanel under “Logs.”

- Nginx servers: Check /var/log/nginx/access.log or your hosting control panel.

- cPanel hosting: Go to cPanel → Logs → Raw Access Logs to download your log file.

- LiteSpeed hosting: Check your control panel or contact your hosting provider.

- Plesk hosting: Go to Websites & Domains → Logs.

Log files can get very large very quickly — the sample log covering just two weeks was over 10MB and contained nearly 40,000 lines. To work with them effectively, you need a proper analysis tool.
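Whatever tool you use, files this size are best read as a stream rather than loaded into memory at once. A minimal sketch, assuming rotated logs are gzip-compressed with a `.gz` suffix (a common logrotate setup); `iter_log_lines` is a hypothetical helper.

```python
import gzip
from typing import Iterator


def iter_log_lines(path: str) -> Iterator[str]:
    """Yield one log line at a time, so large files never sit fully in memory.

    Files ending in .gz (typical for rotated logs) are decompressed on the fly;
    undecodable bytes are replaced instead of raising an error.
    """
    opener = gzip.open if path.endswith(".gz") else open
    with opener(path, "rt", encoding="utf-8", errors="replace") as handle:
        for line in handle:
            yield line.rstrip("\n")
```

For example, `sum(1 for _ in iter_log_lines("/var/log/nginx/access.log"))` counts lines without reading the whole file into memory, and the same loop works unchanged on a compressed file such as a hypothetical `access.log.2.gz`.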

Analyze Your SEO Log File for Free — Up to 1GB

Traditional log file tools like Screaming Frog’s Log File Analyser cost 99 euros per year. That is a significant investment, especially for small businesses and independent SEOs.

We built a free alternative that handles files up to 1GB — that is up to 5 million rows of log data — with no cost and no software to install. Just upload your file and get instant insights about which bots are crawling your site and what they are finding.

Try the SEO Log File Analyzer Tool — free, browser-based, and designed for SEOs who want to understand their GEO performance without paying for enterprise software.

SEO Log File vs. Google Search Console: What Is the Difference?

Google Search Console shows you data from Google’s perspective. Your log file shows you data from your server’s perspective. They are complementary, not competing tools.

Google Search Console tells you which pages Google has indexed, what keywords you rank for, and when Google crawled your pages. Your log file tells you what every bot — not just Googlebot — did on your server, including bots that Google Search Console never tracks. In the GEO era, that second category (GPTBot, ClaudeBot, Applebot, etc.) is just as important as the first.

SEO Log File and Your robots.txt Strategy

Your robots.txt file controls which bots can crawl which parts of your site. Your log file tells you whether those rules are working as intended. In the sample log, both ClaudeBot and Googlebot checked robots.txt first — 77 and 13 times, respectively — before crawling any content pages.

If you want to allow AI bots to crawl your site, make sure your robots.txt is not blocking them. Here is a basic example that explicitly allows several major AI bots:

User-agent: GPTBot
Allow: /

User-agent: ClaudeBot
Allow: /

User-agent: OAI-SearchBot
Allow: /

User-agent: PerplexityBot
Allow: /

User-agent: Applebot
Allow: /

User-agent: Amazonbot
Allow: /

After you update robots.txt, your log file will confirm whether bots are respecting the new rules.
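You can also sanity-check rules before deploying them. This sketch uses Python's standard `urllib.robotparser`; the `User-agent: *` group disallowing `/wp-admin/` is a hypothetical addition for illustration, and `allowed` is a hypothetical helper. Python's parser applies the first matching rule and does not reproduce every detail of how individual crawlers interpret robots.txt, so treat the result as a check, not a guarantee.

```python
from urllib.robotparser import RobotFileParser

# The first two groups mirror the example above; the "*" group is a hypothetical addition.
ROBOTS_TXT = """\
User-agent: GPTBot
Allow: /

User-agent: ClaudeBot
Allow: /

User-agent: *
Disallow: /wp-admin/
"""


def allowed(robots_txt: str, bot_token: str, url: str) -> bool:
    """Return True if robots_txt lets bot_token fetch url, according to Python's parser.

    Pass the short product token (e.g. "GPTBot"), not the full user agent string:
    the parser only looks at the text before the first "/".
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(bot_token, url)


for bot in ("GPTBot", "ClaudeBot", "SomeOtherBot"):
    print(bot, allowed(ROBOTS_TXT, bot, "https://example.com/wp-admin/"))
```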

Frequently Asked Questions About SEO Log Files

How big can an SEO log file get?

Log files grow quickly on busy sites. A medium-traffic site can generate several gigabytes of log data per month. The sample log covering two weeks was already over 10MB with nearly 40,000 lines. Many SEO tools struggle once files reach the gigabyte range, which is why having a tool that handles up to 1GB is important.

Do I need log file analysis if I already use Google Search Console?

Yes. Google Search Console only shows data from Googlebot. In 2026, AI bots from OpenAI, Anthropic, Apple, Amazon, and Meta are equally important for your visibility in AI-generated answers. Log files are the only way to track all of them together.

How often should I analyze my log files?

For most sites, a monthly review is enough to catch patterns. If you are actively working on GEO or have recently changed your robots.txt or site structure, check weekly. If you are launching new content you want AI bots to find quickly, check within 48 to 72 hours of publishing.
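To check a new page in that 48 to 72 hour window, you can look for the first visit from each bot after publication. This sketch assumes log entries already reduced to `(bot_name, path, timestamp)` tuples in the same time zone as the publish time; `first_crawl_after_publish` is a hypothetical helper, and bots missing from its result did not request the page within the window.

```python
from datetime import datetime, timedelta
from typing import Dict, Iterable, Tuple


def first_crawl_after_publish(
    hits: Iterable[Tuple[str, str, datetime]],
    path: str,
    published: datetime,
    window: timedelta = timedelta(hours=72),
) -> Dict[str, datetime]:
    """Return each bot's first request for `path` within `window` after `published`."""
    deadline = published + window
    first: Dict[str, datetime] = {}
    for bot, hit_path, when in hits:
        if hit_path != path or not (published <= when <= deadline):
            continue  # different page, or outside the check window
        if bot not in first or when < first[bot]:
            first[bot] = when
    return first


# Synthetic example: a post published on 26 Feb 2026 at 09:00.
published_at = datetime(2026, 2, 26, 9, 0)
hits = [
    ("GPTBot", "/new-post/", datetime(2026, 2, 27, 8, 0)),
    ("GPTBot", "/new-post/", datetime(2026, 2, 26, 20, 0)),
    ("ClaudeBot", "/new-post/", datetime(2026, 3, 2, 9, 0)),  # after the 72-hour window
    ("ClaudeBot", "/other-post/", datetime(2026, 2, 26, 10, 0)),  # different page
]
print(first_crawl_after_publish(hits, "/new-post/", published_at))
```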

What is the difference between GPTBot and OAI-SearchBot?

GPTBot crawls content to train OpenAI’s AI models. OAI-SearchBot crawls content to power real-time search results inside ChatGPT. If you want your content to appear in live ChatGPT answers, OAI-SearchBot is the one to watch.

Can I block AI bots from crawling my site?

Yes. You can disallow any bot in robots.txt, and reputable crawlers honor those rules, although robots.txt is a request rather than an enforcement mechanism. However, blocking AI bots means your content is unlikely to appear in AI-generated answers from those systems. In most cases, allowing reputable AI bots is the better strategy for GEO visibility.

Conclusion

An SEO log file is one of the most powerful and underused tools in technical SEO. In 2026, it is your primary window into how AI bots interact with your website, and that interaction directly affects your visibility in ChatGPT, Claude, Perplexity, Apple Intelligence, and other AI-powered platforms.

By learning to read your log file, you can see exactly which AI bots are crawling your site, which pages they are reading, and whether your most important content is being discovered. That knowledge is the foundation of any serious GEO strategy.

Ready to start? Upload your log file to the SEO Log File Analyzer Tool. It is completely free, requires no sign-up, and handles files up to 1GB (5 million rows). It replaces Screaming Frog’s Log File Analyser and saves you 99 euros a year.

Every day your brand is absent from AI-generated answers, a competitor earns that recommendation instead. Justin Hà’s GEO AI Branding Service in Vietnam is built to change that, with AI Visibility Audits across ChatGPT, Perplexity and Google AI Overviews; LLMs.txt and Schema setup; monthly prompt testing; and off-site authority building on Reddit, LinkedIn and YouTube. Book a free consultation today.