Why AI Bots Crawl Your Site But Never Cite You (And How to Fix It)

Your server log file shows GPTBot, ClaudeBot, and OAI-SearchBot visiting your site regularly. Your SEO log file confirms they are reading your pages and getting successful 200 responses. But when you open ChatGPT or Perplexity and ask questions in your niche, your brand never appears. A competitor with less content and less traffic gets cited instead.

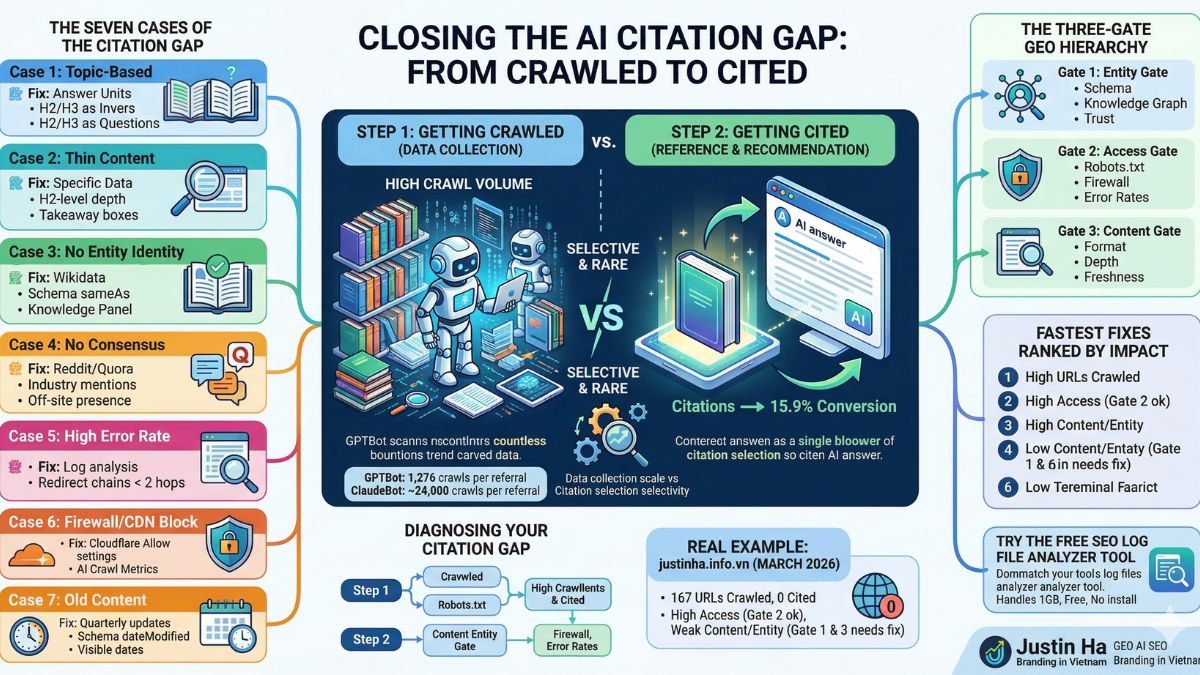

This is called the AI citation gap. It is the most frustrating problem in generative engine optimization (GEO), and it is more common than most people realize. Being crawled and being cited are two completely separate things. Getting crawled is step one. Getting cited requires passing several additional tests that have nothing to do with how often a bot visits your site.

This guide explains every reason why this happens, broken down into specific cases, and gives you a clear fix for each one.

Understanding the Gap: Crawling vs Citing

When an AI bot crawls your page, it collects the raw text and stores it. That is all. The crawl is just data collection. Citation is a completely separate decision made later, when a user asks a question and the AI model decides which sources are worth referencing in its answer.

Think of it this way. A library researcher might scan thousands of books looking for material. But when they write a report, they only cite the books that gave them a clear, direct, trustworthy answer to their specific question. The other books were read but not cited. The same logic applies to AI systems.

Data from Cloudflare Radar covering January through March 2026 shows that ClaudeBot crawls nearly 24,000 pages for every single referral it sends back to website owners. GPTBot crawls around 1,276 pages per referral. These ratios show that crawling happens at enormous scale, but citation is selective and rare. The gap between crawling and citing is where your GEO strategy needs to focus.

To understand why your site is being crawled but not cited, you need to identify which specific case applies to you. There are seven distinct cases, and each one has a different fix.

Case 1: Your Content Does Not Answer a Specific Question

What Is Happening

AI systems are built around question-answering. When a user types a prompt into ChatGPT or Perplexity, the AI generates a specific answer to a specific question. To do that, it looks for content that directly addresses that exact question. If your pages are written around topics and keywords rather than questions and answers, the AI cannot extract a clean, citable answer from your content even if it has read every word.

Most websites are written for traditional SEO. The content might be thorough and well-organized, but it is structured around topics like “Our SEO Services” or “About Generative Engine Optimization” rather than direct questions like “What is generative engine optimization?” or “How long does it take to see results from GEO?” Those two formats look similar to a human reader but are completely different to an AI model trying to extract a citation-ready answer.

How to Fix It

Rewrite your section headers as real questions, not topic labels. Every H2 and H3 should be phrased the way a person would actually ask an AI. Then make the first sentence under each header a direct, complete answer to that question. The full explanation and context can follow in the paragraph below, but the first sentence must stand alone as a usable answer.

This structure is called an answer unit. It has three parts: a direct claim in the first sentence, supporting detail or evidence in the body, and a clear takeaway at the end of the section. AI systems can extract answer units cleanly from structured content. They struggle with content that buries the point in the middle of a long paragraph.

For example, instead of a header that says “GEO Strategy Benefits,” write “What are the benefits of a GEO strategy?” Then open the section with: “A GEO strategy helps your brand appear in AI-generated answers across ChatGPT, Perplexity, and Google AI Overviews, which now reach over 800 million weekly users.” That is a complete, citable answer in one sentence.

Case 2: Your Content Is Too Thin to Establish Authority

What Is Happening

AI systems are built to cite comprehensive, authoritative sources. Surface-level content that covers a topic briefly without going deep does not meet the threshold for citation. Research on citation patterns in 2026 shows that structured pages with real depth are 2.8 times more likely to be cited than pages with thin content. AI models prefer sources that clearly demonstrate expertise on a subject, not pages that mention it in passing.

Thin content looks different depending on the type of page. A service page that lists what you offer without explaining how, why, or with what results is thin. A blog post that answers a question in three paragraphs without providing data, specifics, or actionable detail is thin. Even a long article can be thin if it repeats the same ideas without adding new information or evidence.

How to Fix It

Add specific, precise data to every key claim. “The average citation rate is 15%” gets cited more often than “the citation rate is about 15%.” Precision signals to AI systems that you have done real research, not just written general statements. Name your sources when you use statistics. Claims backed by named, verifiable sources have higher citation rates than unsourced claims.

Go deeper on each topic than you think you need to. AI systems do not reward word count, but they do reward completeness. A 600-word article that answers a question fully, with specific data and clear structure, will outperform a 2,000-word article that circles the same point without resolution. Ask yourself: after reading this section, does the reader have everything they need to act on this information? If not, it is not deep enough for AI citation.

Add a summary box or “Key Takeaway” block at the top or bottom of important sections. AI models extract these easily and use them as citation-ready snippets.

Case 3: Your Brand Has No Entity Identity in AI Knowledge Graphs

What Is Happening

This is the least intuitive case, and it is one of the most common reasons why sites get crawled but never cited. AI language models do not just read your website. They try to map your brand to a stable entry in their internal knowledge graph. A knowledge graph is how an AI understands what an entity is: its name, what it does, who is behind it, and where it appears across the web.

If your brand does not have a clear, consistent identity signal across multiple trusted sources, the AI model cannot reliably confirm that your brand is a real, trustworthy entity. Without that confirmation, it will not cite you, even if your content is excellent. This is the entity gate. Your content must pass the entity gate before the AI even considers whether your content quality is good enough to cite.

One documented case showed that a brand’s name overlapped with a common English word. AI models kept confusing the brand with the word in general usage. Adding a sameAs link in the schema markup, connecting the brand to its Wikidata entry, resolved the confusion within three weeks. Citations followed shortly after.

How to Fix It

Create or update the Wikidata entry for your brand or for key individuals associated with it. Add Organization schema markup to your website with the sameAs property linking to your Wikidata page, LinkedIn company page, and Crunchbase profile. This gives AI models a stable, verifiable identifier to anchor your brand to.
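As a rough illustration, the Organization markup described above could be generated like this. This is a minimal sketch: the brand name, the URLs, and the Wikidata ID are placeholders, and the helper name `build_organization_jsonld` is hypothetical.

```python
import json


def build_organization_jsonld(name, url, same_as):
    """Return an Organization JSON-LD string with sameAs links."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": list(same_as),
    }
    return json.dumps(data, indent=2)


# Placeholder values only: swap in your real profile URLs.
jsonld = build_organization_jsonld(
    name="Example Brand",
    url="https://www.example.com",
    same_as=[
        "https://www.wikidata.org/wiki/Q00000000",
        "https://www.linkedin.com/company/example-brand",
        "https://www.crunchbase.com/organization/example-brand",
    ],
)

# The result goes inside a JSON-LD script tag on the homepage.
print(f'<script type="application/ld+json">\n{jsonld}\n</script>')
```

Generating the markup from one function keeps the brand name and profile links identical everywhere they are published, which is the consistency the entity gate depends on.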

Claim and optimize your Google Knowledge Panel. This is one of the clearest signals to AI systems that your brand is a real, verified entity. If you do not have a Knowledge Panel, work toward one through consistent brand mentions across trusted sources, consistent NAP (name, address, phone) data, and structured entity signals on your site.

Make sure your About page, author bios, and homepage clearly state who you are, what you do, and what makes you credible. Use specific details: years of experience, number of projects, geographic markets served, notable clients or results. Vague brand descriptions are entity signals that AI models cannot reliably interpret.

Case 4: Your Content Has No Consensus Signals from Other Sources

What Is Happening

AI models do not just look at your website in isolation. They synthesize signals from across the web. Research on GEO citation patterns shows that domains with active profiles on platforms like G2, Capterra, Trustpilot, or Yelp have approximately three times higher chances of being cited. Domains with significant mention activity on Reddit and Quora have roughly four times higher citation chances compared to domains that exist only on their own website.

The reason is consensus. When multiple independent sources mention, recommend, or discuss your brand, AI models interpret that activity as a trust signal. A single website saying it is the best at something is noise. Multiple independent sources saying the same thing is consensus. AI systems are trained to cite sources that have broad consensus behind them, not sources that stand alone.

This explains why Wikipedia gets cited so frequently in AI answers. It has extraordinary consensus signals: millions of editors, thousands of references, and mentions across every corner of the internet. Your site does not need to reach that level, but it needs some level of external validation beyond its own content.

How to Fix It

Build your brand presence on the platforms that AI systems actively learn from. Reddit is particularly important: thoughtful, well-supported contributions to relevant subreddits drive citation likelihood much more than quick comments. Focus on subreddits where your target audience asks questions in your niche. Answer in depth, without promotional language, and link to your content only when it genuinely helps the person asking.

LinkedIn articles and YouTube videos that cover your area of expertise create additional consensus signals. When an AI model sees your name associated with a topic across your website, LinkedIn, YouTube, Reddit, and a third-party review platform, it has enough consensus to trust that you are a genuine authority in that space.

Get your brand mentioned in industry publications, even small ones. Guest articles, expert quotes, and case study mentions all create off-site entity signals. A single mention in a credible publication does more for your AI citation rate than ten new pages on your own site.

Case 5: Your Site Has a High Error Rate That Blocks AI Access

What Is Happening

Your log file may show AI bots crawling your site, but a significant share of those requests may be returning errors. If a bot visits a page and receives a 404 error, it cannot read the content. If it receives a 500 server error, the page is completely inaccessible. If it follows a long redirect chain, it may time out before reaching the final page.

In a real log file analyzed for this report, 13% of all requests returned errors, above the 10% danger threshold. For AI bots specifically, 10% of AI requests returned errors, meaning approximately 87 AI requests failed across a 15-day period. Each failed request is a page that the bot could not read, index, or potentially cite. The bot shows up in your log file as a visit, but it left with nothing useful.

The problem compounds over time. AI bots that repeatedly encounter errors on a site reduce their crawl frequency. A site that reliably returns clean 200 responses gets crawled more often and more deeply than a site with frequent errors. Error rates directly affect how much useful content AI bots can collect from your site.

How to Fix It

Filter your log file to show only AI bot user agent strings combined with 4xx and 5xx status codes. This gives you the exact list of pages where AI bots are failing. Sort them by frequency. Fix the highest-frequency errors first.
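The filter described above could be sketched as follows. This assumes your server writes the common "combined" access log format; the regular expression, the bot list, and the function name `failing_ai_requests` are illustrative choices, so adjust them to your own log layout.

```python
import re
from collections import Counter

# User agent substrings to look for (extend as needed).
AI_BOTS = ("GPTBot", "OAI-SearchBot", "ClaudeBot", "PerplexityBot",
           "ChatGPT-User", "meta-externalagent")

# Combined log format: "METHOD path HTTP/x" status size "referer" "user-agent"
LINE_RE = re.compile(
    r'"(?:GET|HEAD|POST) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def failing_ai_requests(lines):
    """Return [((path, status), count)] for AI bot requests that got a 4xx or 5xx,
    sorted by how often each failure occurred."""
    failures = Counter()
    for line in lines:
        match = LINE_RE.search(line)
        if not match:
            continue  # line is not in the expected format
        agent = match["agent"].lower()
        if not any(bot.lower() in agent for bot in AI_BOTS):
            continue  # not an AI bot
        if match["status"][0] in "45":
            failures[(match["path"], match["status"])] += 1
    return failures.most_common()


sample = [
    '203.0.113.5 - - [01/Mar/2026:10:00:00 +0000] "GET /old-page HTTP/1.1" 404 512 "-" "Mozilla/5.0 (compatible; GPTBot/1.1)"',
    '203.0.113.6 - - [01/Mar/2026:10:01:00 +0000] "GET /old-page HTTP/1.1" 404 512 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '203.0.113.6 - - [01/Mar/2026:10:02:00 +0000] "GET /api HTTP/1.1" 500 0 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '203.0.113.5 - - [01/Mar/2026:10:03:00 +0000] "GET /blog HTTP/1.1" 200 9000 "-" "Mozilla/5.0 (compatible; GPTBot/1.1)"',
    '203.0.113.9 - - [01/Mar/2026:10:04:00 +0000] "GET /gone HTTP/1.1" 404 512 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]
for (path, status), count in failing_ai_requests(sample):
    print(count, status, path)
```

To run it on a real file, pass the open file handle instead of `sample`; the function reads one line at a time, so large logs do not need to fit in memory.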

For 404 errors, add a 301 redirect pointing to the closest relevant live page. For deleted content with no equivalent replacement, return a 410 Gone response, which tells bots the page is intentionally removed and they should stop trying. For 500 server errors, check your server error logs for the specific cause: database timeouts, memory limits, or plugin conflicts are common on WordPress sites.

For redirect chains longer than two hops, flatten them to go directly from the original URL to the final destination. A chain of three or more redirects significantly reduces the chance that AI bots complete the journey to the target page.
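One way to find and flatten long chains is sketched below. It assumes you can export your redirect rules as a simple source-to-target mapping; the function names and the example paths are hypothetical.

```python
def resolve_chain(redirects, start, max_hops=10):
    """Follow a {source: target} redirect map from start.

    Returns (final_url, hops). Stops early after max_hops and raises
    ValueError if the chain loops back on itself.
    """
    current, hops, seen = start, 0, {start}
    while current in redirects and hops < max_hops:
        current = redirects[current]
        hops += 1
        if current in seen:
            raise ValueError(f"redirect loop at {current}")
        seen.add(current)
    return current, hops


def flatten(redirects):
    """Point every source directly at its final destination."""
    return {src: resolve_chain(redirects, src)[0] for src in redirects}


# Example rules: /a reaches /d only after three hops.
redirects = {"/a": "/b", "/b": "/c", "/c": "/d"}

long_chains = {}
for src in redirects:
    final, hops = resolve_chain(redirects, src)
    if hops > 2:
        long_chains[src] = hops

flat = flatten(redirects)
print(long_chains)
print(flat)
```

After flattening, every old URL reaches the live page in a single hop, so no bot has to follow an intermediate redirect.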

Case 6: Your Content Is Blocked by a Firewall or CDN

What Is Happening

This case is invisible in your log file, which is what makes it so dangerous. If your CDN or web application firewall is blocking AI bot requests before they reach your server, those blocked requests never appear in your server logs at all. You see no record of the block. From your perspective, the bot is simply not visiting. From the bot’s perspective, your site is inaccessible.

Cloudflare added a one-click option to block AI bots in 2024 and, in 2025, began blocking AI crawlers by default on newly added domains. Many WordPress site owners using Cloudflare had their AI bot access silently cut off without realizing it. The robots.txt file looked correct and open. The site appeared healthy. But AI bots were receiving 403 Forbidden responses at the CDN level and never reaching the origin server.

There are three checkpoints between an AI bot and your content: robots.txt, the firewall or CDN, and indexing directives (noindex tags, canonical mismatches). Most SEOs only check the first. The second and third are where silent blocking happens most often.

How to Fix It

Log into your Cloudflare dashboard and navigate to Security, then Bots, then AI Scrapers and Crawlers. Make sure this setting is set to Allow, not Block. Cloudflare also has an AI Crawl Metrics page that shows which AI bots have attempted to access your site and how many were blocked. Check this first.

If you use a different CDN or WAF (AWS CloudFront, Sucuri, Fastly), check your IP allowlist and bot management rules. Compare the known IP ranges published by OpenAI, Anthropic, and Google against your firewall rules to confirm none of them are being blocked.
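The comparison can be sketched with Python's standard `ipaddress` module. The ranges below are reserved documentation ranges used purely as placeholders, and the bot names are made up; substitute the ranges each vendor actually publishes and the block rules exported from your own firewall.

```python
import ipaddress


def blocked_overlaps(bot_ranges, blocked_ranges):
    """Return (bot, bot_range, blocked_range) for every overlapping pair."""
    hits = []
    for bot, cidrs in bot_ranges.items():
        for cidr in cidrs:
            bot_net = ipaddress.ip_network(cidr)
            for blocked in blocked_ranges:
                blocked_net = ipaddress.ip_network(blocked)
                # Only compare networks of the same IP version.
                if (bot_net.version == blocked_net.version
                        and bot_net.overlaps(blocked_net)):
                    hits.append((bot, cidr, blocked))
    return hits


# Placeholder data: not real vendor ranges.
bot_ranges = {
    "ExampleBot-A": ["192.0.2.0/24"],
    "ExampleBot-B": ["198.51.100.0/25"],
}
blocked_ranges = ["198.51.100.0/24", "203.0.113.0/24"]

for bot, cidr, blocked in blocked_overlaps(bot_ranges, blocked_ranges):
    print(f"{bot}: {cidr} is covered by block rule {blocked}")
```

Any line of output is a rule worth reviewing, because a partial overlap is enough to turn away some of a bot's requests.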

After making changes, wait 24 to 48 hours and then check your server logs for the appearance of bot user agent strings that were previously missing. If OAI-SearchBot suddenly appears in your logs after being absent, the firewall was the problem.

Case 7: Your Content Is Too Old or Has Not Been Updated Recently

What Is Happening

AI systems have a strong bias toward recent content. Research shows that the sources cited in AI responses are, on average, about 26% newer than those in traditional search results. Data from 2026 shows that 79% of AI bots mainly index content from the past two years, and 65% focus on content from the current year. Content older than three months sees significantly fewer citations than freshly updated content on the same topic.

This creates a specific problem for sites with large archives of older content. The pages may have been crawled many times and may contain genuinely useful information. But if the publication date or last-modified date signals that the content is old, AI systems will prioritize newer sources over it when choosing what to cite.

This is especially relevant for data-heavy content. A statistic published in 2023 will lose citation priority to the same statistic updated with 2026 data, even if the underlying information is similar. AI models treat freshness as a proxy for reliability: if you have not updated the page, you may not be actively maintaining its accuracy.

How to Fix It

Set up a quarterly content review schedule for your most important pages. Update statistics to the most recent available data. Add a visible “Last Updated” date to the top of each article and make sure your schema markup includes the dateModified property. AI systems read schema data and use it to assess content freshness.
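A minimal sketch of both pieces follows: Article markup that carries dateModified, and a check for pages that are due for review. The 90-day cutoff is an assumption standing in for "quarterly", and the function names, headline, and dates are examples only.

```python
import json
from datetime import date


def article_jsonld(headline, published, modified):
    """Return Article JSON-LD with datePublished and dateModified."""
    return json.dumps({
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }, indent=2)


def is_due_for_review(modified, today, max_age_days=90):
    """True when the page was last updated more than max_age_days ago."""
    return (today - modified).days > max_age_days


markup = article_jsonld(
    headline="What is generative engine optimization?",
    published=date(2025, 6, 1),
    modified=date(2026, 3, 1),
)
print(markup)
print(is_due_for_review(date(2025, 11, 1), today=date(2026, 3, 15)))
```

Keep the dateModified value tied to a real content change, so the markup and the visible "Last Updated" date always tell the same story.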

Prioritize updating the pages that are already being crawled frequently by AI bots. Your log file shows you which pages bots revisit most often. These are the pages AI considers most relevant to your topics. Updating them with fresh data and additional depth gives the bot new, citation-ready material each time it returns.

Do not just change a date without adding real new content. AI systems can distinguish between genuine updates and cosmetic date changes. Add at least one new data point, example, or section to justify the updated date.

The Three-Level GEO Hierarchy You Must Pass Before Getting Cited

Understanding all seven cases together reveals a structure. Getting cited by AI is not one problem. It is a sequence of three gates, and you must pass all three before citation becomes possible.

- Gate 1 — Entity Gate: Can the AI identify your brand as a real, trustworthy entity? This covers your schema markup, knowledge graph signals, off-site mentions, and consistent brand identity across the web. Cases 3 and 4 above both belong to this gate. If you fail the entity gate, the AI will not look at your content quality at all.

- Gate 2 — Access Gate: Can the AI actually read your pages? This covers robots.txt, firewall rules, CDN settings, JavaScript rendering, error rates, and redirect chains. Cases 5 and 6 both belong to this gate. You can have perfect content and a strong entity but still never get cited if the access gate is closed.

- Gate 3 — Content Gate: Is your content structured and deep enough for AI to extract a clean, citable answer? This covers answer-first formatting, content depth, freshness, and structured data. Cases 1, 2, and 7 all belong to this gate.

Most GEO practitioners focus almost entirely on Gate 3. They rewrite content, add FAQs, and optimize structure. But if Gate 1 or Gate 2 is failing, all of that work produces zero citations. Start by confirming Gates 1 and 2 are open before investing heavily in content optimization.

How to Diagnose Your Own Citation Gap

Before you start fixing anything, you need to know which case or combination of cases applies to your site. Here is how to diagnose each one.

Step 1: Check Your Log File for AI Bot Activity

Open your server log file and search for AI bot user agent strings: GPTBot, OAI-SearchBot, ClaudeBot, meta-externalagent, PerplexityBot, ChatGPT-User. If these bots appear with 200 status codes, Gate 2 (access) is at least partially open. If they appear mostly with 4xx or 5xx codes, Gate 2 has a problem. If they do not appear at all, check your firewall and CDN settings first.
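The rule of thumb above can be turned into a quick script. This is a sketch under assumptions: it expects quoted request lines followed by a three-digit status code, it counts any 2xx response as a success, and it treats "mostly" as a success share below 50%. The names `bot_success_rates` and `diagnose` are hypothetical.

```python
import re
from collections import defaultdict

AI_BOTS = ("GPTBot", "OAI-SearchBot", "ClaudeBot", "meta-externalagent",
           "PerplexityBot", "ChatGPT-User")

# First three-digit number that follows a closing quote: the status code.
STATUS_RE = re.compile(r'" (\d{3}) ')


def bot_success_rates(lines):
    """Return {bot: (total_requests, share_of_2xx_responses)}."""
    totals, ok = defaultdict(int), defaultdict(int)
    for line in lines:
        lowered = line.lower()
        bot = next((b for b in AI_BOTS if b.lower() in lowered), None)
        status = STATUS_RE.search(line)
        if bot is None or status is None:
            continue
        totals[bot] += 1
        if status.group(1).startswith("2"):
            ok[bot] += 1
    return {bot: (totals[bot], ok[bot] / totals[bot]) for bot in totals}


def diagnose(stats, bot, mostly=0.5):
    """Map one bot's numbers onto the diagnosis described in the text."""
    if bot not in stats:
        return "absent: check firewall and CDN settings first"
    _, success = stats[bot]
    if success < mostly:
        return "mostly errors: Gate 2 has a problem"
    return "mostly successful: Gate 2 is at least partially open"


sample = [
    '203.0.113.5 - - [01/Mar/2026:10:00:00 +0000] "GET / HTTP/1.1" 200 512 "-" "GPTBot/1.1"',
    '203.0.113.5 - - [01/Mar/2026:10:01:00 +0000] "GET /a HTTP/1.1" 200 512 "-" "GPTBot/1.1"',
    '203.0.113.6 - - [01/Mar/2026:10:02:00 +0000] "GET /b HTTP/1.1" 404 512 "-" "ClaudeBot/1.0"',
    '203.0.113.6 - - [01/Mar/2026:10:03:00 +0000] "GET /c HTTP/1.1" 500 0 "-" "ClaudeBot/1.0"',
    '203.0.113.6 - - [01/Mar/2026:10:04:00 +0000] "GET /d HTTP/1.1" 200 512 "-" "ClaudeBot/1.0"',
]
stats = bot_success_rates(sample)
for bot in AI_BOTS:
    print(bot, "->", diagnose(stats, bot))
```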

Use the free SEO Log File Analyzer Tool to get a full breakdown instantly. Upload your log file and you will see every AI bot’s request count, success rate, and the specific URLs they visited and the status codes they received. The tool handles files up to 1GB, covering around 5 million rows, completely free with no sign-up required. It replaces Screaming Frog’s Log File Analyser and saves you 99 euros per year.

Step 2: Test Your AI Citation Rate Directly

Open ChatGPT, Perplexity, Claude, and Google AI Overviews. Type 10 to 20 questions that your target audience would ask in your niche. Note which sources get cited in the answers. Note whether your domain appears anywhere. This gives you a baseline: you are either being cited or you are not, and you can see exactly which competitors are winning the citations you should be getting.

If competitors with similar or weaker content are being cited and you are not, the problem is almost certainly Gate 1 (entity signals) or Gate 2 (access). If no one in your niche is being cited consistently, the problem is likely Gate 3 (content format and depth).
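If you record the cited URLs for each test question by hand, a few lines of code can turn the notes into a baseline. The sketch below assumes a simple dictionary of question to cited URLs; the domains are placeholders and `citation_share` is a hypothetical helper (it needs Python 3.9 or later for `str.removeprefix`).

```python
from collections import Counter
from urllib.parse import urlparse


def citation_share(results, my_domain):
    """results maps each test question to the list of URLs the AI cited.

    Returns (share of questions citing my_domain, domains ranked by
    the number of questions in which they were cited).
    """
    domains = Counter()
    cited_in = 0
    for urls in results.values():
        hosts = {urlparse(u).netloc.lower().removeprefix("www.") for u in urls}
        domains.update(hosts)  # each domain counts once per question
        if my_domain in hosts:
            cited_in += 1
    rate = cited_in / len(results) if results else 0.0
    return rate, domains.most_common()


# Placeholder notes from four test prompts.
results = {
    "what is geo?": ["https://competitor.example/a", "https://www.mysite.example/x"],
    "how long does geo take?": ["https://competitor.example/b"],
    "geo vs seo?": ["https://competitor.example/c", "https://other.example/d"],
    "best geo tools?": [],
}
rate, ranking = citation_share(results, "mysite.example")
print(f"cited in {rate:.0%} of questions; top domain: {ranking[0]}")
```

Re-running the same questions each month against the same tally gives you a trend line rather than a one-off impression.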

Step 3: Check Your Entity Signals

Search for your brand name directly in ChatGPT and Claude. Ask “What do you know about [your brand]?” If the AI gives a vague or incorrect answer, or says it has no information, your entity gate is not passing. If the AI describes your brand accurately, your entity signals are working.

Check whether you have an active Wikidata entry, a Google Knowledge Panel, and Organization schema with sameAs links on your homepage. These are the three most reliable entity signals for AI citation systems.

Step 4: Audit Your Content Structure

Take your five most important pages and test each one against the answer unit format. Does each section start with a direct, complete answer to a specific question? Does each page contain at least one piece of specific, named, cited data? Are the headers phrased as questions? Is there a summary or key takeaways section?

If the answer to most of these is no, Gate 3 is your priority after confirming Gates 1 and 2 are working.
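Part of this audit can be roughed out in code. The checks below are crude heuristics, not a measure of what any AI system actually evaluates: a heading counts as a question if it ends with a question mark, the 40-word limit on the first sentence is an arbitrary assumption, and "contains a digit" is only a stand-in for "contains specific data". The function name `audit_section` is hypothetical.

```python
import re


def audit_section(heading, body, max_words=40):
    """Run three quick answer-unit checks on one section."""
    # Take everything up to the first sentence-ending punctuation mark.
    first_sentence = re.split(r"(?<=[.!?])\s+", body.strip(), maxsplit=1)[0]
    return {
        "question_heading": heading.strip().endswith("?"),
        "short_first_sentence": 0 < len(first_sentence.split()) <= max_words,
        "has_number": bool(re.search(r"\d", body)),
    }


topic_style = audit_section(
    "GEO Strategy Benefits",
    "There are many things to consider. Strategy matters a great deal.",
)
answer_style = audit_section(
    "What are the benefits of a GEO strategy?",
    "A GEO strategy helps your brand appear in AI-generated answers. "
    "Restructuring typically takes 1 to 2 weeks.",
)
print(topic_style)
print(answer_style)
```

A script like this only flags sections worth a closer look; whether the first sentence genuinely answers the question still needs a human read.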

A Real Example: 167 URLs Crawled, 0 Cited

The GEO Log Analyzer report for justinha.info.vn from March 2026 shows a GEO Score of 85 out of 100, Grade A. AI bots crawled 167 unique URLs with a 90% success rate, and crawl volume was growing 362% week-over-week. Yet the report shows a crawl-to-citation ratio of 167 crawled URLs to 0 cited URLs. Analytics recorded 24 AI referral visits, but the citation rate from those visits was 0%.

This is a textbook example of a Gate 3 problem, compounded by weak Gate 1 signals, on top of a strong Gate 2 performance. The bots can access the site easily. The success rate is high. The bots are returning frequently. Gate 2 is open. But the content structure and entity signals are not yet strong enough to push over the citation threshold. The fix is not more crawl access. The fix is answer-first content restructuring, deeper content on the /geo/ section (which only received 3% of AI traffic despite being the most important section), and stronger off-site entity signals.

This exact situation applies to hundreds of thousands of websites in 2026. The log file looks healthy. The bots are active. But citations are zero or near-zero because the content is not structured for extraction.

The Fastest Fixes Ranked by Impact

If you need to prioritize, here is the order that produces results the fastest:

- Priority 1 — Fix firewall and CDN blocking (Gate 2): This is the fastest win. If Cloudflare or a WAF is silently blocking AI bots, fixing it takes minutes and immediately opens your site to crawling and citation consideration. Check this before anything else.

- Priority 2 — Fix 404 and 500 errors for AI bots (Gate 2): Each error is a page the bot cannot read. Fix the highest-frequency errors first. This takes one to two days of work and can have immediate impact on crawl success rates.

- Priority 3 — Add Organization schema with sameAs (Gate 1): This is a one-time technical task that strengthens your entity signals permanently. Add it to your homepage. Link to Wikidata, LinkedIn, and Crunchbase. This takes a few hours and the impact compounds over time.

- Priority 4 — Restructure your top 5 pages with answer-first format (Gate 3): Rewrite headers as questions. Add answer units. This typically takes one to two weeks of content work and produces citation results within four to eight weeks.

- Priority 5 — Build off-site entity signals (Gate 1): Start contributing to Reddit threads in your niche. Publish expert content on LinkedIn. Get mentioned in at least one industry publication per month. This is slower work with a two to three month time horizon, but it builds the consensus signals that make AI systems confident enough to cite you.

- Priority 6 — Update stale content with fresh data (Gate 3): Audit your most-crawled pages and identify which ones have not been updated in the past three months. Update statistics, add new examples, and refresh the dateModified in your schema. This is ongoing work.

From Citation Gap to Full GEO Visibility

Closing the AI citation gap is a structured process, not a single fix. It requires passing three gates in sequence: entity, access, and content. Most sites fail at one of these gates without knowing which one. The log file tells you about Gate 2. Direct AI testing tells you about Gate 1. Content auditing tells you about Gate 3.

The good news is that once you identify the specific case that applies to your site, the fix is usually clear and actionable. You do not need to rebuild your entire content strategy. You need to identify the bottleneck and address it directly.

You now know the three gates. You know which cases to check. The next step is knowing exactly which gate is failing on your site and what to do about it first. That is what the GEO AI SEO Branding in Vietnam service does: a full three-gate audit covering entity signals, technical access, and content structure, tested across ChatGPT, Perplexity, Google AI Overviews, and six other AI platforms, followed by a prioritized fix plan and monthly prompt testing to measure your citation rate over time.

Frequently Asked Questions

How long does it take to get cited after fixing the issues?

It depends on which gate you are fixing. Firewall fixes can produce results within 24 to 48 hours once bots start crawling successfully. Entity signal fixes through schema markup typically show results within two to four weeks. Content restructuring typically produces citation results within four to eight weeks for search-based AI tools like Perplexity and ChatGPT search (which relies on OAI-SearchBot). Training-based citation improvement in ChatGPT’s base model takes longer because it depends on model update cycles.

Does having more pages crawled increase citation chances?

Not directly. A site with 20 well-structured, authoritative pages that pass all three gates will get cited more often than a site with 500 thin pages that fail Gate 3. Citation is about quality and structure, not crawl volume. However, having more pages that each pass all three gates does increase your total citation surface area across more topics and query types.

Does robots.txt need to explicitly allow each AI bot?

No. If your robots.txt does not mention a specific bot, it is allowed by default. Explicit Allow rules are only necessary if you have a broad Disallow rule that would otherwise block everything. The most important robots.txt check is making sure you have not accidentally blocked AI bots with a rule intended for something else, like blocking all bots from your admin area.
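You can check this behavior with Python's standard `urllib.robotparser` module. The two robots.txt files below are made-up examples, and the helper name `allowed` is hypothetical; individual crawlers may interpret edge cases differently from this parser.

```python
from urllib.robotparser import RobotFileParser


def allowed(robots_txt, user_agent, url):
    """Return True if user_agent may fetch url under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# No AI bot is mentioned: everything outside /wp-admin/ stays open.
OPEN = """User-agent: *
Disallow: /wp-admin/
"""

# A broad Disallow blocks every bot that has no explicit Allow group.
BROAD_BLOCK = """User-agent: *
Disallow: /

User-agent: GPTBot
Allow: /
"""

page = "https://www.example.com/blog/post"
print(allowed(OPEN, "GPTBot", page))
print(allowed(BROAD_BLOCK, "ClaudeBot", page))
print(allowed(BROAD_BLOCK, "GPTBot", page))
```

Running your live robots.txt through the same function for each bot in your log file is a quick way to spot a rule that blocks more than it was meant to.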

Why is PerplexityBot crawling my site but Perplexity never cites me?

PerplexityBot crawls broadly to build its index. Citation in Perplexity answers requires passing all three gates, but Perplexity places especially high weight on content that directly answers the specific query and on sources that have external authority signals. Perplexity is also known to prioritize sources that appear in Reddit discussions related to the query topic. If your content is not being discussed externally, Perplexity may crawl you but choose better-validated sources for its citations.

What is the minimum content depth needed to get cited?

There is no word count minimum. Research consistently shows that a 500-to-600-word article with perfect answer-unit structure, specific data, and clear attribution can outperform a 3,000-word article with vague, unstructured content. The question to ask is: does this section give a complete, specific, citable answer to a real question? If yes, the length is sufficient. If not, add depth regardless of word count.

Conclusion

Being crawled by AI bots is step one. Being cited by AI systems requires passing three separate gates: your brand must be recognized as a trusted entity, your content must be technically accessible, and your content structure must be optimized for AI extraction. Most sites fail at one of these gates without realizing which one.

Start with your log file. It tells you whether Gate 2 is working. Then test your AI citation rate directly to understand whether Gate 1 is passing. Then audit your content structure against the answer-unit format to evaluate Gate 3. Use the free SEO Log File Analyzer Tool to get your full AI bot breakdown in minutes: upload your log file, see every bot’s success rate and error pattern, and start diagnosing your citation gap with real data. No sign-up required, no software to install, handles files up to 1GB.